What is Crawl Budget and How to Optimize It?

To make your website stand out, you have to wait for search engines to discover you, right? This really matters, because your target audience searches through search engines and builds new habits around the sites they come across there. So it’s important to understand how search engines work and to speed up indexing by providing pages that are easy to crawl. Does all this have anything to do with the crawling budget? What is your crawling budget, and what should you consider to make your site stronger in a short time? What do you need to pay attention to in order to get Google to crawl more of your site, so that you are noticed faster and become visible? And how does crawling behavior work, and what should it mean to you? Start exploring now.

Screpy experts have prepared a detailed guide for you. In it, we examine how the crawler works, what the crawling budget means, and which URLs Google prefers to crawl. This way, you will be able to get your pages crawled at a high rate in a short time. If you are ready, we will explain step by step how to make your project stand out.

What Is the Crawling Budget?

The crawl budget is not a financial fee; the term is figurative. Crawling budget tells you how many pages Google crawls on your site in a given time period. The number of pages Google crawls in a day can vary widely: five, fifteen hundred, or five hundred thousand are all plausible.

So what should the crawling budget mean for us? Let’s put it this way: Google must crawl and index a page before it can appear in the SERP. If the number of pages on your site exceeds the number of pages Google crawls on a daily basis, some of your pages have not been crawled yet and are therefore not indexed by Google. This tells you that you need to highlight these pages and present them to Google.
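To make the comparison concrete, here is a rough back-of-the-envelope sketch. The function name and the numbers are hypothetical, and it assumes the simplest possible case, where every crawl request goes to a page that has not been crawled yet. In practice Google revisits pages, so a real full pass would likely take longer.

```python
import math

def days_to_crawl(total_pages: int, pages_crawled_per_day: int) -> int:
    """Estimate how many days one full pass over the site would take.

    Simplifying assumption: no page is crawled twice during the pass.
    """
    if pages_crawled_per_day <= 0:
        raise ValueError("pages_crawled_per_day must be positive")
    return math.ceil(total_pages / pages_crawled_per_day)

# Hypothetical site: 10,000 pages, about 1,500 pages crawled per day.
print(days_to_crawl(10_000, 1_500))  # 7
```

If the result is much larger than the time you are willing to wait for new pages to show up, the crawling budget deserves a closer look.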

Crawling Budget and SEO: How Are They Related?

Above, we told you something very important: your pages need to be crawled in order to be shown in the SERP, and a mismatch between the crawling budget and the number of pages on your site means some pages cannot be shown in the SERP. That means you won’t get organic traffic from these pages, and unfortunately you won’t rank for the keywords these pages are targeting.

Hey, now you understand the relationship between crawling budget and SEO much better, right?

So, how much do you need to worry about this? Is there anything you can do for the crawling budget? Well, let’s put it this way: Google is really good at crawling in its current version. Every day, Googlebot crawls millions of pages and changes their rankings, adding them to the index or applying various ranking penalties. So you don’t have to worry too much about the crawling budget. What you need to do is get the basic things Google asks of your pages right and wait for Google to crawl them.

BONUS: Of course, it’s really important to optimize every page on your site for crawlability. For example, if you manage a large site with around 10,000 pages and you are going to add many more pages at once, it may be a good idea to check your crawling budget to see whether these pages can be indexed soon.

So, what are the visible factors that negatively affect the crawling budget and thus put your SEO processes in trouble? Let’s say it right away: using a lot of redirects on your pages. Doing so reduces your page speed and your strength in the eyes of Google, and it also lowers your crawling budget. For more on speed, see our page speed optimization guide.

When Is Crawling Budget Critical?

You don’t have to worry about the crawling budget for your popular pages during the indexing and visibility processes. This concept usually becomes relevant when you have just added a large number of pages to your site. Pages that are newer, that are not sufficiently connected to other web pages through links and internal linking efforts, and that do not need to be crawled frequently may be more affected by the crawling budget problem.

There’s one more thing: if you’ve just opened your site and have created a lot of pages to crawl, the crawling budget will still be a problem for you. While you are still waiting for Google to recognize your site and crawl the homepage, you are also asking it to crawl dozens of pages. This means accepting that you will wait longer than necessary and that some of your pages may never be seen. While you wait, Google will be unsure whether your pages are worth crawling. So you will have to wait a while even for crawling, and indexing will take even longer. All of this will delay your SEO work.

The crawling budget will also interest you if you have updated many of your pages in a short time and want all of them to be indexed in their updated versions. On very large sites with many pages, you may have to wait longer than you think for the same page to be crawled again.

Can Crawl Activity Be Checked?

We talk about the crawling budget so much, we say that crawling is a must to stand out on Google, so is there a way to measure or track this activity? Hey, is everything going to be a secret forever?

Of course not. Tracking crawling activity is really easy for SEOs who are used to Google Search Console. You can review the crawling of all your pages in a short time by viewing the Crawl Stats report in the console. Here is a little bonus information: did you know that Screpy works integrated with Google Search Console?

The information you will see on the Crawl Stats report page is quite detailed. For example, if your site has subdomains, you can see how many crawl requests there are for each of them, examine the trend for these pages, and check from the “problems” tab whether the crawling activity you need is out of balance with your crawling budget.

Google Search Console can also give you a detailed breakdown of crawl requests. You can get statistics broken down by response, by file type, by purpose, and by Googlebot type. You can examine the pages that return each of the crawl response codes listed below, and then, with the help of Google Search Console, look into why each page returns an error. Common crawling response codes are as follows:

- robots.txt not available

- Unauthorized (including codes 401 and 407)

- Server error (5XX)

- Other client error (4XX)

- DNS unresponsive

- DNS error

- Fetch error

- Page could not be reached

- Page timeout

- Redirect error

- Other error
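If you also have your own list of HTTP status codes (for example, from server logs), a small tally can mirror the HTTP-related categories above. This is only a sketch: the function names and the sample data are hypothetical, the "OK", "Redirect" and "Other" labels are illustrative, and categories such as DNS errors or timeouts are not HTTP status codes, so they are not covered here.

```python
from collections import Counter

def crawl_bucket(status: int) -> str:
    """Map an HTTP status code to a rough bucket (labels are illustrative)."""
    if status in (401, 407):
        return "Unauthorized"
    if 500 <= status <= 599:
        return "Server error (5XX)"
    if 400 <= status <= 499:
        return "Other client error (4XX)"
    if 300 <= status <= 399:
        return "Redirect"
    if 200 <= status <= 299:
        return "OK"
    return "Other"

def summarize(statuses):
    """Count how many responses fall into each bucket."""
    return Counter(crawl_bucket(s) for s in statuses)

# Hypothetical status codes returned to crawler requests.
sample = [200, 200, 301, 404, 401, 503, 200]
for bucket, count in summarize(sample).most_common():
    print(bucket, count)
```

Checking 401 and 407 before the general 4XX range keeps "Unauthorized" separate from other client errors, as in the list above.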

Snippet Errors

Each of the above errors may be the specific reason a page is not crawled by Googlebot. For example, sometimes a snippet you add to your page source code can tell Googlebot to ignore your page instead of processing it. This is useful on pages that you do not want to highlight, such as the Privacy Policy. But if you accidentally place such a snippet on popular pages, or if such a snippet is included in the JavaScript library you use, your page will not be updated and will have problems in the indexing process.
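One way to catch such a snippet is to scan a page’s HTML for it. The sketch below assumes the snippet in question is a robots meta tag carrying a `noindex` (or `none`) directive; that is an assumption about what kind of snippet is meant, and the class and function names are hypothetical. It only looks at static HTML, so a tag injected later by JavaScript would not be found.

```python
from html.parser import HTMLParser

class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.directives.append(directive.strip().lower())

def blocks_indexing(html: str) -> bool:
    """Return True if the HTML carries a noindex (or none) robots directive."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives or "none" in finder.directives

# Hypothetical page source.
page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(blocks_indexing(page))  # True
```

Running a check like this over your most important pages is a quick way to spot a directive that was added by accident.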

Server Problems

Similarly, various crawling problems may occur when the server does not perform well enough. Both crawling more than necessary (overcrawling) and not crawling at all are problems for your site. Therefore, you need to fix these types of problems in line with the recommendations in the report.

Redirect Problems

Finally, another very common problem is having too many redirects on a specific page. If you want to keep the user experience strong, hosting a large number of redirects is a poor choice, as it will reduce page speed. In addition, it will negatively affect your SERP visibility, because it also affects crawling.
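To see how long a redirect chain is, you can follow it hop by hop. The following sketch works on a hypothetical in-memory mapping from a URL to the URL it redirects to (which you might build from your own server configuration), so it makes no network requests. The function name and `max_hops` limit are assumptions for illustration.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow `start` through the `redirects` mapping and return every URL visited.

    Stops at the final destination, when a loop is detected,
    or after `max_hops` redirects.
    """
    chain = [start]
    seen = {start}
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in seen:  # redirect loop
            break
        seen.add(target)
    return chain

# Hypothetical redirect rules.
rules = {"/old": "/older", "/older": "/new"}
chain = redirect_chain("/old", rules)
print(chain)           # ['/old', '/older', '/new']
print(len(chain) - 1)  # 2 hops
```

Any chain with more than one hop is a candidate for pointing the first URL straight at the final destination.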

Explore More: Crawling History on Google Search Console

Google Search Console is a very useful tool for tracking crawlability, because it gives you an important clue for understanding when a problem that prevents crawling occurred: you can see the last time each page was crawled on the dashboard. The response code received is also listed for each page. As you can imagine, this simplifies optimization by telling you in which time window the problem occurred.

What Is Included in Crawling Budget?

What do you need to include when trying to understand your site’s crawling budget? Almost everything that is part of the crawling process!

- All URLs included in your site

- All of your site’s subdomains

- All server requests that occur on your site

- All AMP and m-dot pages (so your mobile pages are also included in your crawling budget)

- Language version pages with hreflang tag

- CSS and XHR requests

- Javascript requests

Not all of your crawling budget is spent by a single Googlebot. Several Googlebot types may be crawling your site, and together they make up your total crawl budget. By reviewing the Crawl Stats report in Google Search Console, you can see the list of Googlebot types and get detailed insights. In short, everything related to the crawling budget and more can be under your control.

Explore More: What is Technical SEO?

How to Improve Crawling Budget – Best Practices and More

If you want to increase the number of pages Google crawls in a certain time period, the things you can do are basically divided into two categories:

- Giving Google a reason to crawl your site more, and more often (for example, by being more user-oriented)

- Making it easier to crawl your site (by meeting technical SEO requirements)

We’ve put together a to-do list that covers both of the above. If you are ready, you can start the review.

Optimize Your Site Speed

Previously, we shared a detailed page speed optimization guide with you so that you can do everything necessary about speed. With Screpy’s page speed tool, you can run a detailed page-by-page audit, identify speed shortcomings on each page, and fix them with the help of tasks. In particular, finding the pages that have crawling problems through Google Search Console and improving the speed of those specific pages lets you run a targeted optimization process.

Internal Linking Works

If a web page receives a lot of internal links, Google may prioritize it in the crawling process, because it is more easily convinced that the page is powerful and authoritative. This means you can get your pages indexed without worrying about the crawling budget. It’s quite natural for every page on your site to get a link from at least one other page: many pages get links from blog pages or the homepage. However, it will make things easier if blog posts also link to each other or to the service pages.

When crawling a piece of content, Googlebot also follows the internal links in the content and takes a look there. This increases the likelihood that the linked page will be crawled and indexed. The best way to let Googlebot reach these pages is to do internal linking well.
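To get a feel for which internal links a crawler would find in a piece of content, you can extract them from the page HTML. This is a minimal sketch using only the Python standard library; the class name, function name, and the `example.com` URLs are hypothetical, and "internal" is assumed here to mean "same host as the page".

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

def internal_links(page_url, html):
    """Return the sorted, de-duplicated links that stay on the page's own host."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    links = set()
    for href in collector.hrefs:
        absolute = urljoin(page_url, href).split("#")[0]  # drop fragments
        if urlparse(absolute).netloc == host:
            links.add(absolute)
    return sorted(links)

# Hypothetical blog post with one internal, one external, one relative link.
html = ('<a href="/services">Services</a> '
        '<a href="https://other.example/x">Partner</a> '
        '<a href="post-2#top">Next post</a>')
print(internal_links("https://example.com/blog/post-1", html))
```

Pages that rarely appear in the output across your site are the ones that could use more internal links.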

Make Sure Your Website Architecture Is Strong

If you want to increase the probability that any page on the web gets crawled, you need to make it popular. A popular page is one with high link authority. To achieve this, your website architecture must not leave certain pages looking unimportant. For this, you need to build a flat architecture, where every page can be reached from the homepage in a small number of clicks. With this type of architecture, link authority flows more evenly, so each page has a similar chance of being crawled in Google’s eyes. This minimizes the possibility of certain pages being underestimated.
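One rough way to check how flat an architecture is: measure how many clicks each page is from the homepage. The sketch below does a breadth-first search over a hypothetical internal link graph (a dictionary mapping each page to the pages it links to). The function name and the sample site are assumptions for illustration.

```python
from collections import deque

def click_depths(links, home="/"):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical site structure.
site = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/services"],
}
for page, depth in click_depths(site).items():
    print(depth, page)
```

In a flat architecture most pages sit at a low depth; pages that end up many clicks deep are candidates for extra links from higher-level pages.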

Orphan Pages Problem

While doing off-page SEO and link building work on your website, you may have noticed that one of the best ways to increase page authority is to have backlinks. If there is no internal or external link pointing to a specific page, that page is an orphan page. Giving Google no clues to discover this page really means leaving crawling to chance, so in this case it is quite appropriate to worry about the crawling budget. If you want these pages to be found and crawled by Google, you need to increase the number of places on the web where they are linked. Internal and external links both work well for this.

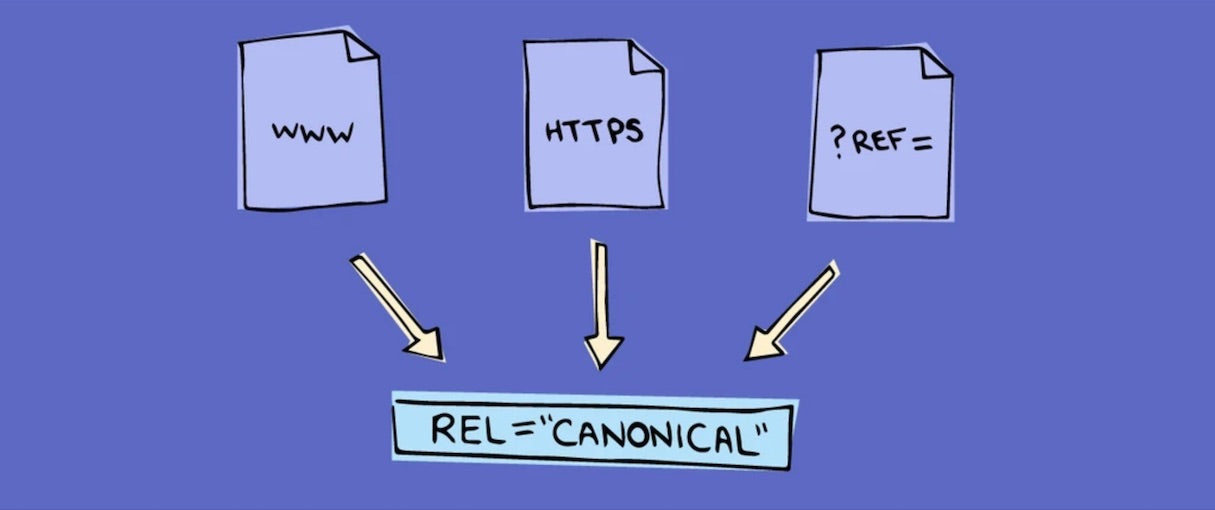
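Finding orphan pages from the internal-linking side can be sketched as a set comparison: take every page you know exists (for example, from your sitemap) and subtract every page that some other page links to. The function name and sample data below are hypothetical, and the sketch only considers internal links, so a page reported here may still have external backlinks.

```python
def find_orphans(all_pages, links, home="/"):
    """Return pages that no other page links to (the homepage is excluded)."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(p for p in set(all_pages) if p not in linked and p != home)

# Hypothetical list of known pages and internal link graph.
pages = ["/", "/blog", "/services", "/landing/spring-sale"]
site = {"/": ["/blog", "/services"], "/blog": ["/services"]}
print(find_orphans(pages, site))  # ['/landing/spring-sale']
```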

Duplicate Contents and Canonical Links

Let’s state the primary requirement of content marketing: completely unique and powerful content. Such content is much more likely to be crawled by Google. If you have pages with duplicated content, you may be wasting your crawling budget, because Googlebot will crawl the page and then not index it because it is a duplicate. Similarly, relying on canonical links to connect many pages with duplicate content still adds to the crawling workload. All of these situations negatively affect the crawling budget. That’s why we recommend keeping duplicate content on a website to a minimum. Unique, powerful, SEO-friendly, quality content. That’s exactly it!
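A simple way to spot exact duplicates on your own site is to fingerprint the text of each page and group pages that share a fingerprint. This sketch is not how Google detects duplicates; it is a hypothetical helper that only catches pages whose text is identical after lowercasing and collapsing whitespace. Near-duplicates would need a fuzzier comparison.

```python
import hashlib
import re
from collections import defaultdict

def fingerprint(text):
    """Hash the text after lowercasing and collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def duplicate_groups(pages):
    """Group URLs whose text has the same fingerprint; `pages` maps URL -> text."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]

# Hypothetical page texts.
pages = {
    "/shoes": "Our best running shoes",
    "/shoes?ref=home": "Our best  running shoes\n",
    "/about": "About our company",
}
print(duplicate_groups(pages))  # [['/shoes', '/shoes?ref=home']]
```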